Blazing Fast "On The Fly" Image Transformation with AWS Lambda and Sharp

This article shows you how to build a Serverless image transformation REST service using Lambda, S3 and API Gateway. Many considerations went into designing this stack, and I will walk you through my design choices.

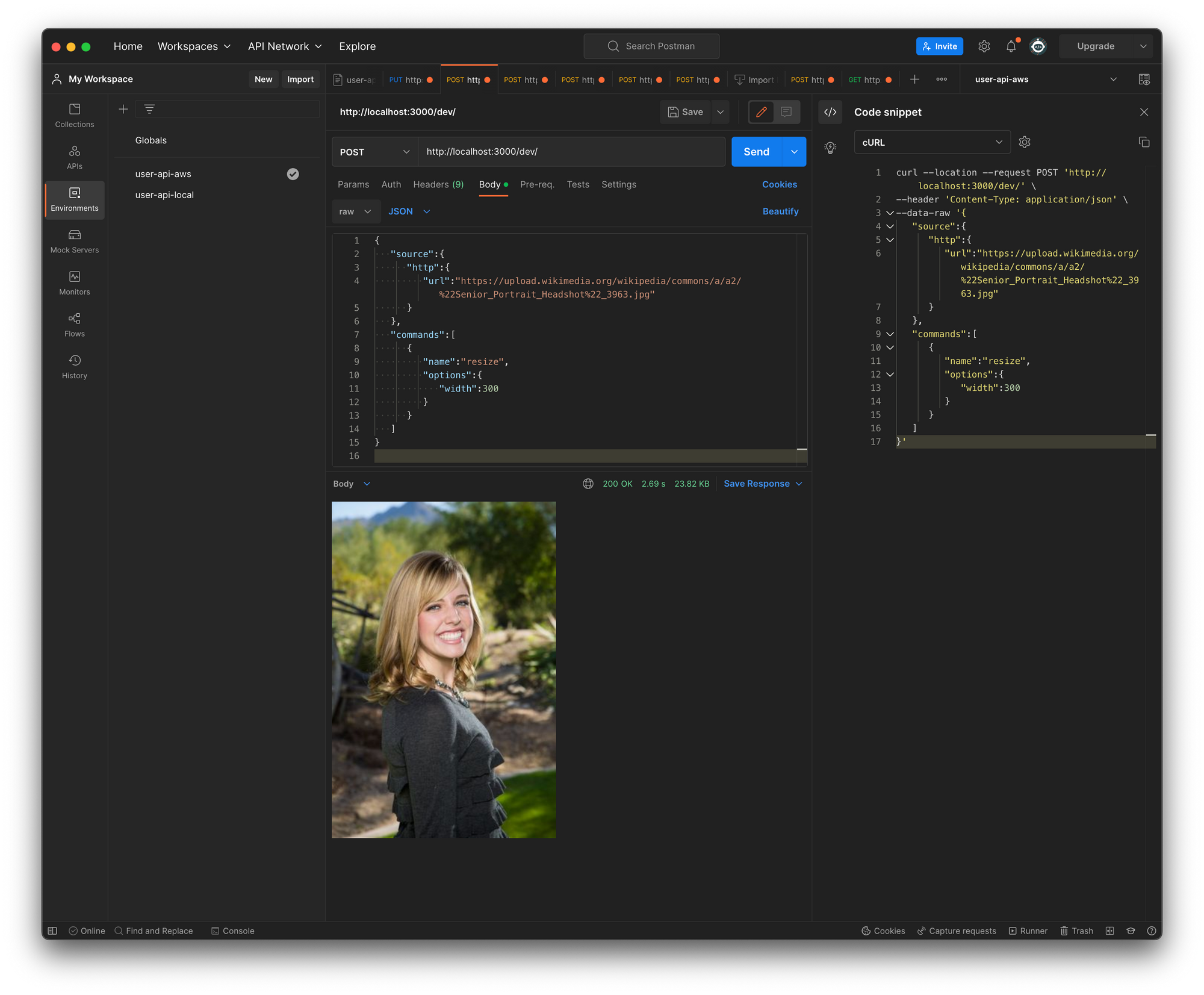

Apply rich and chain-able image transformations with a simple REST interface

Apply complex image transformations using composable commands:

    curl --location --request POST 'https://xxxxxxxxxx.execute-api.ap-southeast-2.amazonaws.com' \
      --header 'Content-Type: application/json' \
      --data-raw '{
        "source": {
          "http": {
            "url": "https://example.com/image.jpg"
          }
        },
        "commands": [
          {
            "name": "resize",
            "options": {
              "width": 150
            }
          },
          {
            "name": "sharpen",
            "options": 5
          }
        ]
      }'

The plan

When I set out to build a Serverless image transformation service, I researched other solutions, both paid and free, and based on what I gleaned I drew up the requirements below:

- Must be dead-simple to configure, deploy and integrate with existing apps

- Zero manual configuration and "one click" deployment

- Transformations must be composable to allow any mix of filters and their respective order in a single API call

- Must support file sizes larger than the current limits of AWS Lambda (6 MB) and API Gateway (10 MB)

- Must have an agnostic API interface to qualify as a generic and re-usable web component

- Must have a rich interface for image processing including method-chaining and seamless conversion between various image formats, e.g. jpg, png and gif

- Must be fast enough to apply common transformation jobs within the lifespan of a Lambda function using async invocation
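To make the composability requirement more concrete, here is a minimal sketch of how a list of commands could be applied strictly in the order they arrive. It is not the service's actual implementation: the `Pipeline` type is a hypothetical stand-in that only records the steps, and the handler registry is illustrative. In a real build each handler would presumably call the matching Sharp method instead.

```typescript
// A command as it appears in the request body: a name plus arbitrary options.
interface Command {
  name: string;
  options?: unknown;
}

// Hypothetical stand-in for an image pipeline; it only records the steps applied.
interface Pipeline {
  steps: string[];
}

type Handler = (pipeline: Pipeline, options: unknown) => Pipeline;

// Registry of supported commands (illustrative, not the service's real list).
const handlers = new Map<string, Handler>([
  ['resize', (p, o) => ({ steps: [...p.steps, `resize(${JSON.stringify(o)})`] })],
  ['sharpen', (p, o) => ({ steps: [...p.steps, `sharpen(${JSON.stringify(o)})`] })],
]);

// Apply every command in the order given and reject unknown names early.
function applyCommands(commands: Command[], start: Pipeline = { steps: [] }): Pipeline {
  return commands.reduce((pipeline, command) => {
    const handler = handlers.get(command.name);
    if (!handler) {
      throw new Error(`Unsupported command: ${command.name}`);
    }
    return handler(pipeline, command.options);
  }, start);
}

const result = applyCommands([
  { name: 'resize', options: { width: 150 } },
  { name: 'sharpen', options: 5 },
]);
console.log(result.steps);
```

Because the commands are folded over the pipeline one by one, swapping two entries in the array swaps the order in which the filters run, which is the behaviour the requirement asks for.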

Hard AWS limitations

Everything in life comes with limitations, and AWS is no different. For this build, three limits stand out like a sore thumb:

- API Gateway has a 10 MB payload limit

- API Gateway has a maximum integration timeout of 30 seconds

- AWS Lambda has a 6 MB payload limit

These limits severely restrict the usefulness of an image upload and processing service. It is not uncommon for my Google Pixel to produce photos larger than 6 MB, and what if the bitmap image transformations take longer than 30 seconds?
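As a rough illustration of how these limits shape the design, the sketch below decides per job whether the file can travel inline with the request and whether the work fits a synchronous call. The constants are the figures quoted above; treating a megabyte as 1024 × 1024 bytes, and the `JobEstimate` and `JobPlan` shapes, are assumptions of this example.

```typescript
// Limits quoted in this article (binary megabytes are an assumption here).
const LAMBDA_PAYLOAD_LIMIT_BYTES = 6 * 1024 * 1024;
const API_GATEWAY_PAYLOAD_LIMIT_BYTES = 10 * 1024 * 1024;
const API_GATEWAY_TIMEOUT_MS = 30 * 1000;

// Hypothetical description of a job before it is submitted.
interface JobEstimate {
  fileSizeBytes: number;
  estimatedDurationMs: number;
}

interface JobPlan {
  upload: 'inline' | 'presigned-s3';
  invocation: 'sync' | 'async';
}

function planJob(job: JobEstimate): JobPlan {
  // A request passes through both services, so the smaller limit wins.
  const inlineLimit = Math.min(LAMBDA_PAYLOAD_LIMIT_BYTES, API_GATEWAY_PAYLOAD_LIMIT_BYTES);
  return {
    upload: job.fileSizeBytes <= inlineLimit ? 'inline' : 'presigned-s3',
    invocation: job.estimatedDurationMs < API_GATEWAY_TIMEOUT_MS ? 'sync' : 'async',
  };
}

// An 8 MB photo that should process in about two seconds.
console.log(planJob({ fileSizeBytes: 8 * 1024 * 1024, estimatedDurationMs: 2000 }));
```

Note that the effective inline ceiling is the Lambda limit, not the API Gateway one, since every request has to clear both.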

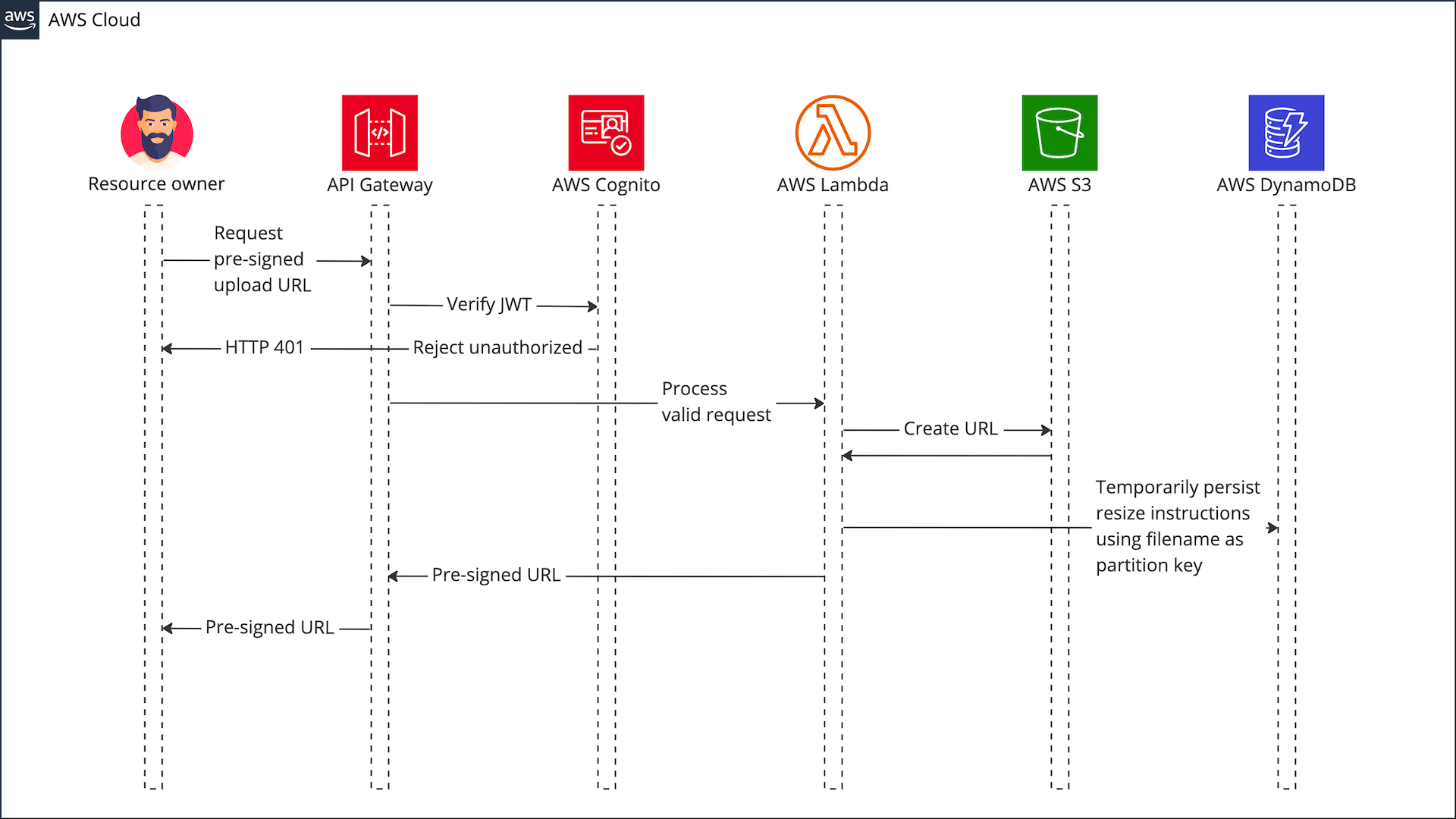

Upload without file size restrictions

The file upload restrictions can be overcome by generating a pre-signed upload URL, which lets the client stream the file directly to S3.

This, however, comes at a cost:

- The user has to make an extra call to obtain the pre-signed S3 upload URL

- Increased complexity, because the image upload to S3 and the user-submitted image processing instructions are completely decoupled
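From the client's point of view, the extra round trip might look like the sketch below. The `/upload-url` route, its `filename` query parameter and the `uploadUrl` response field are hypothetical names chosen for illustration, and the HTTP client is passed in as a parameter so the flow can be exercised without a network.

```typescript
// Minimal shape of the fetch-like function this sketch depends on.
interface HttpResponseLike {
  ok: boolean;
  status: number;
  json(): Promise<unknown>;
}

type HttpClient = (
  url: string,
  init?: { method?: string; body?: Uint8Array },
) => Promise<HttpResponseLike>;

async function uploadImage(
  http: HttpClient,
  apiBaseUrl: string,
  filename: string,
  data: Uint8Array,
): Promise<string> {
  // Step 1: the extra call that obtains the pre-signed URL (hypothetical route).
  const urlResponse = await http(
    `${apiBaseUrl}/upload-url?filename=${encodeURIComponent(filename)}`,
  );
  if (!urlResponse.ok) {
    throw new Error(`Could not obtain upload URL: ${urlResponse.status}`);
  }
  const { uploadUrl } = (await urlResponse.json()) as { uploadUrl: string };

  // Step 2: PUT the bytes straight to S3, bypassing API Gateway and Lambda.
  const putResponse = await http(uploadUrl, { method: 'PUT', body: data });
  if (!putResponse.ok) {
    throw new Error(`Upload failed: ${putResponse.status}`);
  }

  // Return the object location without the signature query string.
  return uploadUrl.split('?')[0];
}
```

The returned location is what ties the two decoupled steps back together: the caller has to carry it into the later processing request itself, because S3 knows nothing about the transformation commands.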

This is how you create a pre-signed upload URL using the AWS SDK for JavaScript v3 (@aws-sdk):

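    import {
      PutObjectCommand,
      PutObjectCommandInput,
      S3Client,
    } from '@aws-sdk/client-s3';
    import { getSignedUrl } from '@aws-sdk/s3-request-presigner';

    // Assumed client setup: region and credentials come from the Lambda environment.
    const s3Client = new S3Client({});

    export const getPreSignedUploadUrl = async (
      filename: string,
      folder = '/temp',
    ): Promise<string> => {
      const input: PutObjectCommandInput = {
        Bucket: process.env.IMAGE_BUCKET_NAME,
        Key: `${folder}/${filename}`,
        ACL: 'public-read',
      };
      const command = new PutObjectCommand(input);

      // The URL stays valid for one hour (expiresIn is given in seconds).
      return getSignedUrl(s3Client, command, { expiresIn: 3600 });
    };

Sequence diagram for obtaining the upload URL for an authenticated user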